The Simple Geometry Behind 3D Travel Photos

Striking travel photos feel three-dimensional not because of camera specs but because photographers stack foreground, midground, and background to trigger depth reconstruction in the brain.

Succulents keep leaves plump through water-storing tissues, waxy cuticles, CAM photosynthesis, and hydraulic controls that limit loss in extreme aridity.

2026-04-27

Modern bungee jumping posts far lower fatality rates than road travel, yet its engineered safety margin is so narrow that a single millimeter error in rope, harness, or anchor can still be deadly.

2026-05-09

Racing a beach buggy on sand can flood the body with dopamine and adrenaline through speed, instability, and sensory overload, activating reward and stress circuits similar to intense strength training.

2026-05-14

Microgravity lets the spine stretch and blood shift upward, making astronauts taller while reducing cardiac workload enough to shrink heart mass.

2026-04-27

Paddling fast water drives extreme perceptual load, quiets the default mode network, spikes dopamine and endorphins, and ends as a meditative reset for attention and mood.

2026-04-27

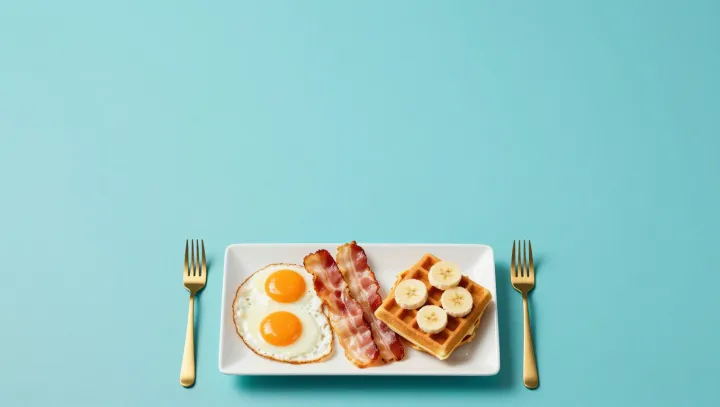

New research shows that missing breakfast measurably weakens attention, working memory and decision‑making within a single morning, as changes in glucose regulation and neural efficiency show up in lab tests.

2026-05-09

Many drivers of automatics waste up to 20% of their fuel by staying in Drive and pressing the accelerator harder, instead of coasting, using light throttle, and shifting to manual mode to trigger the built-in fuel-cut logic.

2026-04-28

Namcha Barwa, the so‑called Great Bend’s Gate, is lower than Everest yet far more lethal, as isolation, violent weather and unstable geology trap climbers in a near‑closed arena.

2026-04-20

Top minimalist interiors use visible emptiness as a visual buffer, lowering cognitive load and helping the brain segment, predict, and relax inside a room.

2026-04-28

Opened coconut water can look clear and taste sweet while silently supporting rapid microbial growth, thanks to its nutrients, mild acidity, and cold-tolerant pathogens.

2026-05-13